
We can take small steps to help close the communication gap.

Speech to text and translators have made things a lot easier.

But what about those who may not speak or cannot hear?

What about them?

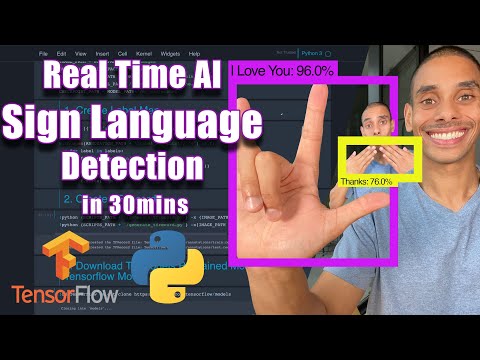

Well… you can start using TensorFlow Object Detection and Python to bridge that gap. In this video, you'll take the first steps by building an end-to-end custom object detection model that translates sign language in real time.

Specifically, you will learn how to:

1. Collect images for deep learning using your webcam and OpenCV

2. Label images for sign language detection with LabelImg

3. Set up the configuration of the TensorFlow Object Detection pipeline

4. Use transfer learning to train a deep learning model

5. Detect sign language in real time with OpenCV
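
To give a feel for steps 1 and 3, here is a minimal sketch. It is not the code from the video or the training template. The label names, folder layout, image count, and the helper names `image_path`, `collect_images`, and `label_map_text` are all assumptions made for illustration. It captures webcam frames into one folder per sign with OpenCV and builds the label map text in the `item { name, id }` form that the TensorFlow Object Detection API reads, with ids starting at 1.

```python
import time
import uuid
from pathlib import Path

# Hypothetical sign labels; replace with the signs you actually want to detect.
LABELS = ["hello", "thanks", "yes", "no"]


def image_path(root: Path, label: str) -> Path:
    """Return a unique JPEG path inside a per-label folder."""
    return root / label / f"{label}.{uuid.uuid4().hex}.jpg"


def collect_images(root: Path, labels=LABELS, per_label: int = 15,
                   pause: float = 2.0) -> None:
    """Capture up to `per_label` webcam frames for each label (step 1)."""
    import cv2  # imported here so the other helpers work without OpenCV

    cap = cv2.VideoCapture(0)  # device 0 is assumed to be the default webcam
    if not cap.isOpened():
        raise RuntimeError("Could not open the webcam")
    try:
        for label in labels:
            (root / label).mkdir(parents=True, exist_ok=True)
            print(f"Collecting images for '{label}'")
            time.sleep(pause)  # a moment to get the sign ready
            for _ in range(per_label):
                ok, frame = cap.read()
                if not ok:
                    continue  # skip frames the camera failed to deliver
                cv2.imwrite(str(image_path(root, label)), frame)
                time.sleep(pause)  # time to vary the pose between shots
    finally:
        cap.release()


def label_map_text(labels=LABELS) -> str:
    """Build label map text for the Object Detection API (step 3)."""
    items = [
        f"item {{\n  name: '{name}'\n  id: {i}\n}}\n"
        for i, name in enumerate(labels, start=1)  # id 0 is reserved for background
    ]
    return "\n".join(items)


if __name__ == "__main__":
    print(label_map_text())
    # Uncomment on a machine with a webcam attached:
    # collect_images(Path("images/collected"))
```

Once the images are collected, you would label them with LabelImg (step 2) using the same names that appear in the label map, so the annotations and the pipeline configuration agree.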

Download the training template here: https://github.com/nicknochnack/RealTimeObjectDetection

Other links mentioned in the video

Face mask detection video: https://youtu.be/IOI0o3Cxv9Q

LabelImg: https://github.com/tzutalin/labelImg

Install the TensorFlow Object Detection API: https://tensorflow-object-detection-api-tutorial.readthedocs.io/en/latest/install.html

Oh, and don't forget to connect with me!

LinkedIn: https://www.linkedin.com/in/nicholasrenotte

Facebook: https://www.facebook.com/nickrenotte/

GitHub: https://github.com/nicknochnack

Happy coding!

Nick

P.S. Let me know how it goes, and leave a comment if you need any help!
